Accurately measure displays to detect, quantify, and correct defects, improving device quality and production yield

Evaluate luminance, intensity, flux, CIE chromaticity, contrast, and more to characterize LEDs, backlit panels, and other light sources

Combine automated inspection with the precision of human visual perception to detect subtle surface and assembly defects

What do you need to measure?

Consumer Displays

Automotive Lighting & Displays

Aerospace Lighting & Displays

Augmented & Virtual Reality

Surfaces & Cover Glass

Facial & Gesture Recognition

Designed and manufactured at Radiant.

Installed and supported around the globe.

Featured Products

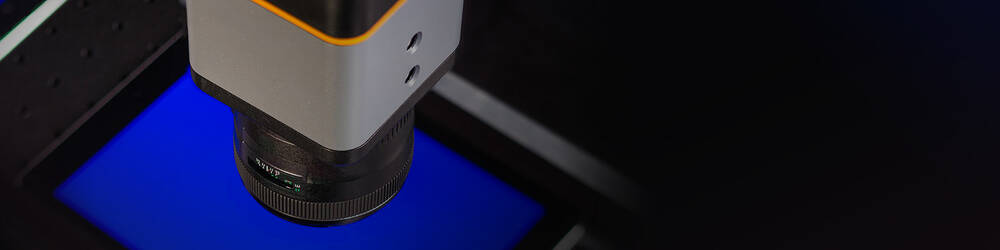

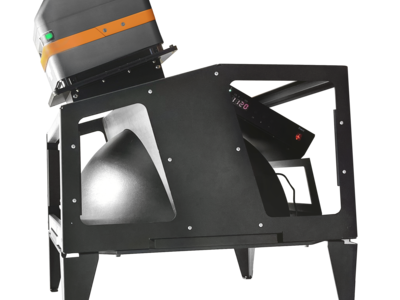

Award-Winning Near-IR Measurement System

Radiant's Near-Infrared (NIR) Intensity Lens has received multiple awards for its application of Fourier optics and high-resolution imaging. It measures the complete angular output of near-IR LEDs and lasers in less than a second, enabling production-level quality control of light sources for 3D sensing.