Ensuring the Quality of Next-Generation Automotive HUDs

Automotive head-up displays (HUDs) project key information onto the windshield, within the driver’s field of view, so that drivers can keep their eyes on the road. HUDs originated in military aviation in the 1950s and are now used extensively in aircraft cockpits and in pilots’ head-mounted (helmet) systems. Because they offer similar benefits for vehicle safety and operation, HUDs are increasingly common in new automobiles.

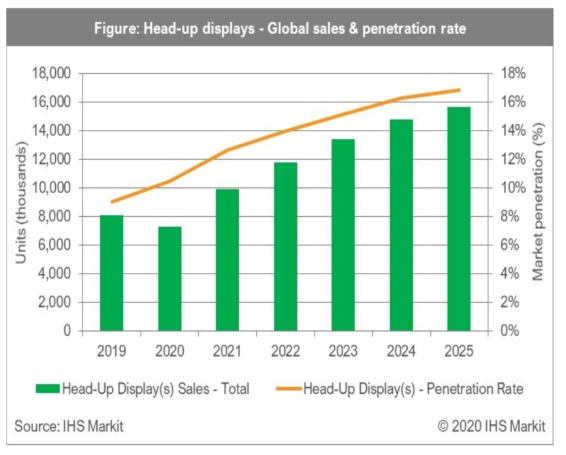

Industry analysts predict that HUD integration in new vehicle models will grow faster than that of any other type of automotive display [1]. Initially, most of these systems will be conventional two-dimensional (2D) HUDs (sometimes called “Box-HUDs”), but 3D, holographic, and augmented reality (AR) HUDs are just around the corner.

Strong growth is projected for HUD sales, along with increasing penetration into the commercial vehicle market. (Image © IHS Markit)

HUDs project transparent graphic images onto a reflective film on the car’s windshield, making the images appear to float several meters in front of the driver; this distance is called the virtual image distance (VID). The horizontal and vertical angular size of the projected image, its field of view (FOV), is specified in degrees. The HUD eyebox is the three-dimensional region within which the driver’s eyes can move while still seeing the entire display. In today’s conventional HUDs, the eyebox is limited by the size of the projection area on the windshield, so the amount of information that can be projected is also limited, typically to basic speed and navigation symbols.

Beyond FOV, conventional 2D HUDs have other limitations: they cannot overlay graphics onto real-world objects, and they do not integrate vehicle sensor data into a real-time human-machine interface (HMI) that places virtual images meaningfully within the changing environment in the driver’s FOV [2]. To expand HUD capabilities, developers are turning to the next generation: AR-HUD systems.

Augmented Reality HUD

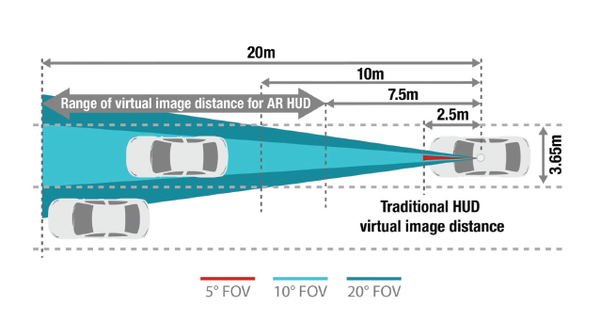

AR-HUD systems offer both a wider horizontal FOV and a longer projection distance (longer VID). Typically, an AR-HUD provides a functional viewing area with at least 7 meters VID and 10° FOV, allowing the graphical content of the virtual image to be projected across a broader portion of the visible environment.

Conventional HUD systems project a FOV just 5° wide at 2.5 to 3 meters; AR-HUD systems can project at 7 to 20 meters, enabling integration of virtual images with real-world elements on the road. (Image © Texas Instruments [3])
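To put these FOV and VID figures in concrete terms, the sketch below converts an angular FOV into the approximate width of the virtual image at a given VID using simple geometry, width = 2 × VID × tan(FOV/2). It is a minimal illustration that assumes the quoted FOV is the full horizontal angle and that the eye sits on the display’s central axis; the function name `virtual_image_width` is hypothetical and not part of any Radiant software.

```python
import math


def virtual_image_width(fov_deg: float, vid_m: float) -> float:
    """Approximate width (m) of a virtual image spanning fov_deg at distance vid_m.

    Assumes the eye is on the display's central axis: width = 2 * VID * tan(FOV / 2).
    """
    if not 0.0 < fov_deg < 180.0:
        raise ValueError("fov_deg must be between 0 and 180 degrees")
    if vid_m <= 0.0:
        raise ValueError("vid_m must be positive")
    return 2.0 * vid_m * math.tan(math.radians(fov_deg) / 2.0)


# FOV and VID figures quoted in this article for conventional HUDs and AR-HUDs.
cases = [
    ("conventional HUD, 5 deg at 2.5 m", 5.0, 2.5),
    ("conventional HUD, 5 deg at 3 m", 5.0, 3.0),
    ("AR-HUD, 10 deg at 7 m", 10.0, 7.0),
    ("AR-HUD, 10 deg at 20 m", 10.0, 20.0),
]
for label, fov, vid in cases:
    print(f"{label}: about {virtual_image_width(fov, vid):.2f} m wide")
```

Under these assumptions, a 5° conventional HUD image at 2.5 m is only about 0.22 m wide, while a 10° AR-HUD image at 7 m is about 1.22 m wide, more than five times as wide.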

AR-HUD systems project information so that it appears integrated with the real-world environment, for example by placing directional indicators on the roadway ahead. These advanced systems also integrate sensor information, such as lane departure warnings and guidelines that help a driver correct course.

An integrated AR-HUD lane departure warning helps guide a driver back on track. (Image © Continental AG)
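Placing a graphic “on” the road means drawing it at the angle at which that stretch of road appears to the driver. The sketch below uses flat-road geometry to compute how far below the horizontal a road point at a given distance appears, and checks whether that angle falls inside a HUD’s vertical FOV. The eye height, look-down angle, and vertical FOV are hypothetical values chosen for illustration, and a real AR-HUD would also have to account for road slope, vehicle pitch, and head position; this is not how any particular vendor implements image registration.

```python
import math


def depression_angle_deg(eye_height_m: float, ground_distance_m: float) -> float:
    """Angle below the horizontal at which a point on a flat road appears to the driver."""
    if ground_distance_m <= 0:
        raise ValueError("ground distance must be positive")
    return math.degrees(math.atan2(eye_height_m, ground_distance_m))


def within_vertical_fov(angle_deg: float, look_down_deg: float, vfov_deg: float) -> bool:
    """True if a downward angle lies inside a vertical FOV centred on the look-down angle."""
    half = vfov_deg / 2.0
    return look_down_deg - half <= angle_deg <= look_down_deg + half


# Hypothetical values for illustration only: 1.2 m eye height, HUD centred 4 degrees
# below horizontal, 4 degree vertical FOV (so it covers 2 to 6 degrees down).
EYE_HEIGHT_M = 1.2
for distance in (10, 15, 20, 30, 40):
    angle = depression_angle_deg(EYE_HEIGHT_M, distance)
    status = "can be overlaid" if within_vertical_fov(angle, 4.0, 4.0) else "outside FOV"
    print(f"road point {distance:>2} m ahead: {angle:.2f} deg down, {status}")
```

With these assumed values, only road points roughly 11 to 34 meters ahead fall inside the display window, which illustrates how the FOV limits the parts of the road that can carry an overlay.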

The first commercially available AR-HUD will debut in the 2021 Mercedes-Benz S-Class, due later this year. The system integrates various vehicle sensor inputs (such as the forward-looking radar and optical sensors) to provide alerts and information to the driver. For example, the HUD can show the distance to the car ahead, mark the edge of the road in low-light conditions, or provide navigation help with directional arrows that appear overlaid on the driving lanes.

The AR-HUD of the 2021 Mercedes-Benz S-Class in action.

Critical Quality Considerations for Conventional HUDs and AR-HUDs

Next-generation HUD systems place new demands on the display test equipment used to verify virtual image quality. How does HUD measurement change for 3D and AR-HUD systems, which project new types of virtual images across larger fields of view and at a range of depths?

Because HUDs are used while the vehicle is in operation, clear and legible images and characters are critical for safety. Industry regulations specify stringent optical requirements for HUDs, so manufacturers must carefully measure the visual performance of their systems to ensure compliance. To do this, automotive OEMs need effective display metrology systems, ideally an all-in-one HUD measurement solution capable of 2D, 3D, and AR-HUD optical quality testing.

A Comprehensive, Automated HUD Testing Solution

Radiant Vision Systems provides a comprehensive hardware and software solution for fully automated testing of HUDs in automotive and other applications. Engineered in response to OEM and supplier testing requirements (both custom requirements and those set forth by SAE and ISO), the solution includes a ProMetric® Imaging Photometer, an electronically controlled lens, and TT-HUD™ Software to enable rapid, automated visual inspection of HUD projections and virtual images.

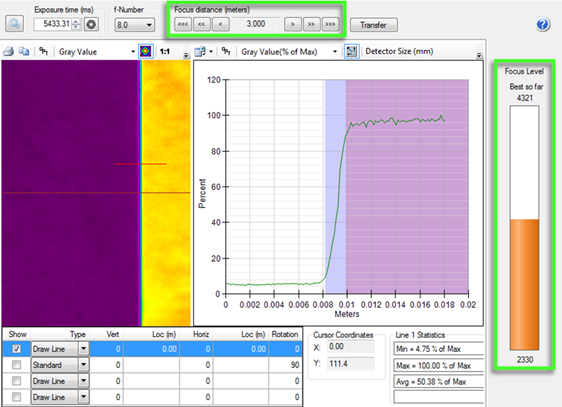

TT-HUD Software uses the electronically controlled lens of the connected ProMetric imaging system to focus automatically at the HUD’s virtual image distance. Once the image is in focus, the software calculates the distance to the projected image in meters, enabling VID qualification without manual distance measurements.
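The exact algorithm TT-HUD uses is not described here. As a generic illustration of the idea, the sketch below performs a contrast-based focus sweep: it captures a frame at each candidate lens position, scores sharpness with the variance of a discrete Laplacian, and converts the sharpest lens-to-sensor distance into an object distance with the thin-lens equation 1/f = 1/u + 1/v. The `capture` callable, the 50 mm focal length, and the simulated camera are hypothetical; a real imaging system would rely on a calibrated mapping between lens setting and focus distance rather than an ideal thin lens.

```python
import numpy as np


def sharpness(image) -> float:
    """Focus metric: variance of a 4-neighbour discrete Laplacian (higher means sharper)."""
    img = np.asarray(image, dtype=float)
    lap = (img[:-2, 1:-1] + img[2:, 1:-1] + img[1:-1, :-2] + img[1:-1, 2:]
           - 4.0 * img[1:-1, 1:-1])
    return float(lap.var())


def object_distance_m(focal_length_m: float, image_distance_m: float) -> float:
    """Solve the thin-lens equation 1/f = 1/u + 1/v for the object distance u."""
    if image_distance_m <= focal_length_m:
        raise ValueError("image distance must exceed the focal length")
    return 1.0 / (1.0 / focal_length_m - 1.0 / image_distance_m)


def estimate_vid_m(capture, lens_positions_m, focal_length_m: float) -> float:
    """Focus sweep: capture a frame at each lens-to-sensor distance, keep the sharpest,
    and convert that distance into a virtual image distance via the thin lens."""
    best_v = max(lens_positions_m, key=lambda v: sharpness(capture(v)))
    return object_distance_m(focal_length_m, best_v)


# Synthetic demo (hypothetical): a checkerboard whose contrast falls off as the
# lens moves away from the position that focuses a target 7 m away.
F = 0.05                                    # assumed 50 mm focal length
V_TRUE = 1.0 / (1.0 / F - 1.0 / 7.0)        # lens-to-sensor distance in focus at 7 m
PATTERN = (np.indices((32, 32)).sum(axis=0) % 2).astype(float)


def simulated_capture(v: float) -> np.ndarray:
    contrast = np.exp(-5000.0 * abs(v - V_TRUE))
    return 0.5 + (PATTERN - 0.5) * contrast


positions = np.linspace(V_TRUE - 3e-4, V_TRUE + 3e-4, 61)
print(f"estimated VID: {estimate_vid_m(simulated_capture, positions, F):.2f} m")
```

Because the thin-lens estimate becomes very sensitive to lens position as the target moves farther away (in this model, a 10 µm change in lens-to-sensor distance shifts the estimate by roughly 0.2 m at 7 m and by more than 1 m at 20 m), fine lens control and calibration matter more as VID grows.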

Watch this brief product demonstration to learn how the Radiant HUD measurement solution can test all aspects of HUD optical performance simultaneously, including variable-distance projections such as those in AR-HUD systems.

In this video, you will learn about:

- The TT-HUD Software test suite for evaluating optical performance, including brightness, color, contrast, modulation transfer function (MTF), ghosting, distortion, eyebox, and more.

- Using electronically controlled lenses and Radiant software to focus rapidly on variable-distance projections and automatically calculate VID in real-distance units.
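For a flavor of what such a test suite computes, the sketch below implements two simple, commonly used metrics on measured data: a full-on/full-off contrast ratio from luminance values (cd/m²), and a grid distortion figure given as the largest displacement of a measured grid point from its ideal position, as a percentage of image width. These definitions and function names are assumptions for illustration; TT-HUD and the SAE and ISO requirements may define and compute these quantities differently.

```python
import numpy as np


def contrast_ratio(on_luminance, off_luminance) -> float:
    """Full-on/full-off contrast: mean luminance of the lit state over the dark state."""
    on = float(np.mean(on_luminance))
    off = float(np.mean(off_luminance))
    if off <= 0:
        raise ValueError("dark-state luminance must be positive")
    return on / off


def max_grid_distortion_pct(measured_xy, ideal_xy, image_width: float) -> float:
    """Largest measured-vs-ideal grid point displacement, as a percentage of image width.

    Positions and width must share one unit (e.g. pixels or degrees).
    """
    measured = np.asarray(measured_xy, dtype=float)
    ideal = np.asarray(ideal_xy, dtype=float)
    if measured.shape != ideal.shape or measured.ndim != 2 or measured.shape[1] != 2:
        raise ValueError("expected two matching (N, 2) arrays of x, y positions")
    displacement = np.linalg.norm(measured - ideal, axis=1)
    return 100.0 * float(displacement.max()) / image_width


# Illustrative, made-up inputs only (not measured data).
print(contrast_ratio([12000.0, 11800.0], [8.0, 8.4]))
ideal = [[0, 0], [50, 0], [100, 0], [0, 40], [50, 40], [100, 40]]
measured = [[0.4, 0.1], [50, 0], [101.2, -0.3], [0.2, 40.5], [50, 40], [100.9, 41.0]]
print(max_grid_distortion_pct(measured, ideal, image_width=100.0))
```

Reporting the worst-case grid displacement is a deliberately simple choice; per-region or radial distortion maps give more diagnostic detail when a HUD's optics warp the image unevenly.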

CITATIONS

1. Davis, K., “Automotive Display Market Overview and COVID-19 Impact,” IHS Markit presentation at Society for Information Display (SID) Display Week 2020, August 3, 2020.
2. Firth, M., “Introduction to automotive augmented reality head-up displays using TI DLP® technology,” Texas Instruments, May 2019.
3. Ibid.
