New Smart Helmets Incorporate HUDs Using AR/MR Displays

In a world where smartphones, smart speakers, and smartglasses have become commonplace, the development of smart helmets for consumers was almost inevitable. After all, protecting our heads from damage is a high priority for humans, and we’ve also become used to almost-anywhere connectivity from all our devices.

The term “smart helmet” is being used to describe virtually any type of helmet that has some electronic enhancement and/or network connection. Many motorcycle helmets now incorporate Bluetooth and audio speakers to integrate with the rider’s cellphone for communication, alerts, and music. New smart football helmets are emerging that include built-in sensors—or even electroencephalography (EEG) capabilities—that can detect head impacts and assess brain activity to provide a real-time alert if a player has received a concussion during training or game play.

Certain types of smart helmets—the focus of this blog post—are now adding a visual display component. Essentially, these new models combine the traditional protective role of a helmet with the connectivity and image display capabilities of smartglasses.

The market for these helmets, generally referred to as HUD (head-up display) helmets, is still emerging but is expected to boom as the technology and its integration mature. The HUD helmet industry segment is projected to grow at a CAGR of 30% from just over $100 million in 2022 to more than $1 billion globally by 2030, with companies such as DigiLens, BMW Motorrad, and Jarvish among the key players.1
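As a quick sanity check on the growth projection, the compound annual growth rate (CAGR) arithmetic can be sketched in a few lines of Python. The round inputs below ($100 million and $1 billion) are assumptions for illustration; the cited report gives only "just over" and "more than" figures.

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate as a fraction (0.30 means 30%)."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


def project(start_value: float, rate: float, years: float) -> float:
    """Value after compounding `rate` once per year for `years` years."""
    return start_value * (1.0 + rate) ** years


# Illustrative round numbers only (assumed, not taken from the report).
years = 2030 - 2022
implied_rate = cagr(100e6, 1e9, years)
value_at_30_percent = project(100e6, 0.30, years)

print(f"Implied CAGR for 10x growth over {years} years: {implied_rate:.1%}")
print(f"$100M compounded at 30% for {years} years: ${value_at_30_percent / 1e6:.0f}M")
```

With these round inputs, tenfold growth over eight years implies a rate of about 33%, while 30% growth from exactly $100 million reaches roughly $816 million. The quoted figures therefore fit together only if the 2022 base is somewhat above $100 million, which is consistent with the "just over" wording.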

The BMW HUD motorcycle helmet concept.

Early Smart Helmets: Military Applications

Helmets that integrate with a HUD component are not actually new; they have been used by military pilots since 1980. Also known as head-mounted or helmet-mounted displays (HMDs), today’s military smart helmets include models designed to be worn by on-the-ground troops, often incorporating night vision and other advanced capabilities needed when on patrol or engaged in a combat situation.

For example, the US Army’s TAR (tactical augmented reality) helmet is designed to support tactical operations on the ground. It consists of “a small, one-inch-by-one-inch (2.5 x 2.5 cm) eyepiece that is mounted on a soldier's helmet. The eyepiece overlays a map onto the soldier's field of vision, instantly offering target information and GPS-tracked data showing where the rest of their team is located.”2 Wearing the helmet, soldiers no longer have to use a handheld GPS device. Plus, “the TAR device is wirelessly connected to a tablet worn on a soldier's waist and a thermal site mounted on their rifle. This means additional data, such as an image of the target or the distance to a target, can be displayed through the eyepiece.”3

Modern smart helmets for military pilots are designed to integrate with helicopters, fighter jets, and other aircraft. For example, the US Air Force’s F-35 Generation III Stryker Jet smart helmet (which we wrote about several years ago) incorporates a head tracker/transmitter unit (HTU), which tracks the pilot’s head movements to ensure that the camera images and other data displayed reflect the pilot’s line-of-sight (LOS) second by second. Some of the images are created using a fixed camera assembly (FCAM) unit outside the aircraft.

A display management computer (DMC) controls all helmet elements and is interfaced to multiple aircraft systems. The HMD “is built around a custom-fitted insert based on a 3D scan of the pilot’s head and combines noise-cancelling headphones, night vision, a forehead-mounted computer, and a projector.”4

Foot soldier with a Scorpion augmented reality HMD (left); the F-35 Stryker helmet (right). (Left image: public domain, photo credit: SSgt David Dobrydney / Right image Source)

However, with a price tag of roughly US$400K - $560K for the Stryker helmet (fully integrated with a $100 million F-35 fighter jet), these are obviously not products for consumer shoppers. But the market is moving in that direction: commercially oriented smart helmets are already being developed for motorcyclists and industrial users. At a minimum, most (85%) consumer and industrial smart helmets provide a wireless connection to other smart devices or cloud servers.5 They offer considerably less functionality than the Stryker helmet, but some nevertheless incorporate advanced technology.

Smart Helmets on the Road

For example, the most cutting-edge motorcycle helmets incorporate HUDs using augmented/mixed reality (AR/MR) technology. Like the HUDs in some automobiles and airplanes, key data such as speed and navigation information can be projected in front of the operator, in their line of sight, so there’s no need to take their eyes off the road. In automobiles, the HUD surface is the interior of the car’s windshield. In smart helmets, it can be the interior of the transparent visor, or a smaller screen positioned in the wearer’s line of sight.

The latest CrossHelmet X1 for motorcyclists integrates a head-up display, sound, rear-view camera, touch panel control, a safety light, and smartphone connectivity. (Image © CrossHelmet)

Additional Smart Helmet Use Cases

Smart capabilities are being incorporated into headgear, hard hats, and helmets that are already worn in a variety of settings. For example, law enforcement and security organizations are exploring helmets that incorporate functions such as thermal imaging (for COVID screening at airports) and facial recognition to identify criminal suspects. Smart helmets for firefighters incorporate thermal imaging to increase visibility in smoke-filled spaces.

The C-Thru helmet-mounted AR system (left) and the detailed imaging it provides to firefighters even in zero-visibility environments (right). Image source: CBS News.

Additional smart helmet designs are being explored for use in industrial and construction settings where workers already rely on helmets for safety. One smart construction helmet recently released to the market is the result of a partnership between Microsoft and Trimble, offering a HoloLens-hardhat mashup that lets construction workers view 3D overlays of building schematics and models.

Similarly, industrial designers Huwan Peng and Haoyu Liu have attracted attention for their design of the NOCTUA mixed-reality helmet, although it is still in the prototype phase.

The NOCTUA mixed-reality safety helmet. (Image Source)

One challenge for helmet makers is continuing to meet performance and component specifications of a given industry, while at the same time meeting the optical and visual performance demands of AR/MR display devices and HUDs. For example, motorcycle helmets—both traditional and smart—must conform to requirements from standards bodies such as the US Department of Transportation (FMVSS 218) and Economic Commission for Europe (ECE 22.05), meet ISO requirements, and pass Snell testing and/or CE product certification. Aimed at protecting the head from injury, specifications for helmet performance can include impact attenuation, energy absorption, penetration resistance, a minimum of 105° peripheral visibility on both sides, and flame resistance.

Face shields have their own set of standards, such as those published by the US Vehicle Equipment Safety Commission (VESC 8) or ECE 22.05J, ECE 22.05P, or ECE 22.05NP. Snell certification, issued by an independent third party, is optional but desirable for helmets. Its testing parameters for face shields include penetration resistance, which is judged by shooting an air rifle at three spots on the visor using a pointed lead pellet travelling at a velocity of approximately 500 kph (>300 mph). Any penetration means the face shield fails. For racing helmets, testing additionally requires that any bump raised on the visor’s surface by the pellet be no more than 2.5 mm.6
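The pass/fail logic described above is simple enough to express as a short Python sketch. The function and parameter names here are hypothetical, chosen for illustration. The sketch encodes only the two criteria mentioned in this post, not the full Snell test procedure.

```python
KM_PER_MILE = 1.609344  # exact, by definition of the international mile


def kph_to_mph(kph: float) -> float:
    """Convert kilometers per hour to miles per hour."""
    return kph / KM_PER_MILE


def face_shield_passes(penetrated: bool,
                       bump_height_mm: float = 0.0,
                       racing: bool = False,
                       max_bump_mm: float = 2.5) -> bool:
    """Apply the two criteria described in the text (hypothetical helper).

    - Any penetration is a failure.
    - For racing helmets, a raised bump taller than `max_bump_mm` is a failure.
    """
    if penetrated:
        return False
    if racing and bump_height_mm > max_bump_mm:
        return False
    return True


print(f"500 kph is about {kph_to_mph(500):.0f} mph")
print(face_shield_passes(penetrated=False, bump_height_mm=3.0, racing=False))
print(face_shield_passes(penetrated=False, bump_height_mm=3.0, racing=True))
```

The unit conversion confirms that 500 kph sits a little above 300 mph, matching the figures quoted for the pellet velocity.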

A helmet undergoes penetration testing (Image Source)

Although government regulations haven’t yet been enforced for automotive and motorcycle HUDs, manufacturers nevertheless need to conduct rigorous quality testing of helmet AR system components and performance to ensure user safety. The HUD/AR display surface (if separate from the visor) may not have to stop a bullet, but it should still be designed to avoid breakage under rugged road conditions and in the event of an accident.

At the same time, manufacturers must ensure that the HUD system and AR/MR display offer quality and performance that meet customer expectations. Projected images must be clear, bright, and legible in any setting, with no distortion or defects. Because these displays are located so close to the user’s eyes, tiny imperfections in image luminance, shape, or clarity can be glaringly obvious.

Quality Testing for HUD Smart Helmets

The function and performance of smart helmets depend in part on the display itself. If information is not displayed clearly under all ambient lighting and operating conditions, it won’t help the rider and can even endanger them. Ensuring that HMD screens meet industry standards, are free of defects, and function optimally requires careful testing during the production phase.

Although these helmets are HUDs, they are not like an automotive HUD that projects images away from the driver, onto a screen on the dashboard or the interior of the car’s windshield. A helmet’s display surface is located very close to the wearer’s eye, just millimeters away. Functionally, a smart helmet is more akin to AR/VR/MR headsets, known collectively as near-eye displays (NEDs). Thus, the measurement and testing requirements of these systems are closer to those of an AR/MR device.

Testing XR Display Devices

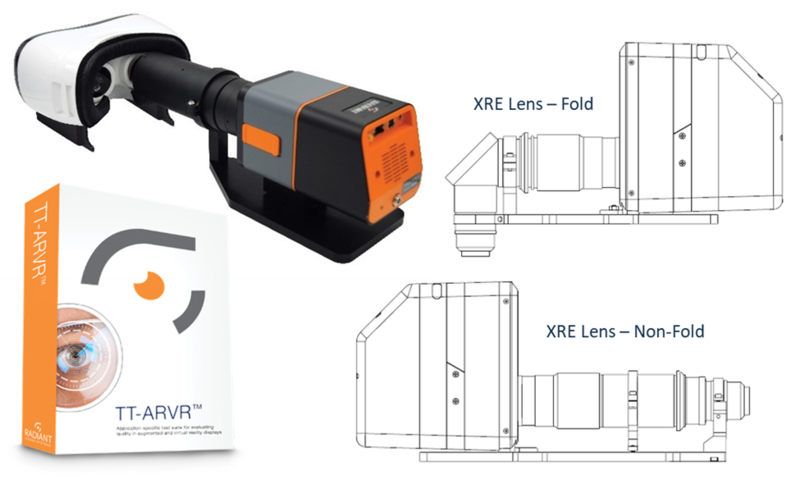

Radiant provides test and measurement systems designed to evaluate the quality of NED devices that are viewed close to the human eye. Our AR/VR Lens, paired with a Radiant ProMetric® Imaging Photometer or Colorimeter and software, provides a complete solution for accurate and efficient measurement of headset displays.

The lens design simulates the size, position, and field of view of the human eye. Unlike alternative lens options, where the aperture is located inside the lens, the aperture of the AR/VR Lens is located on the front of the lens, enabling positioning of the imaging system’s entrance pupil within headgear to view NEDs from the same location as the human eye. This is critical for evaluating the way display performance impacts visualization for the operator. The measurement lens must “see” the same image across the same field of view as the operator. This calls for wide field of view optics, which Radiant’s AR/VR Lens employs to capture up to 120° horizontal from a near-eye position.

Radiant’s TrueTest™ Software provides the leading display test algorithms, with the capability to sequence tests for rapid evaluation of all relevant display characteristics in a matter of seconds, applying selected tests to a single image captured by the camera. Standard tests in TrueTest include luminance, chromaticity, contrast, uniformity, mura (blemishes), pixel and line defects, and more. An application-specific TT-ARVR™ software module adds unique tests for AR/VR display analysis when used with Radiant’s AR/VR Lens. These include:

- Modulation transfer function (MTF) to evaluate image clarity based on Line Pairs, Slant Edge Contrast (ISO 12233), or Line Spread Function (LSF)

- Image integrity evaluation including Distortion, Focus Uniformity, and Warping analyses

- Spatial x,y position reported in degrees (°)
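To give a feel for what a line-pair clarity measurement involves, here is a minimal Python sketch of contrast modulation (Michelson contrast) computed from a luminance profile sampled across alternating bright and dark lines. This is the textbook formula, not the TT-ARVR implementation, and the sample luminance values are hypothetical. A full MTF measurement would repeat this at several spatial frequencies and normalize by the modulation of the input pattern.

```python
def michelson_contrast(luminance_profile):
    """Contrast modulation (Lmax - Lmin) / (Lmax + Lmin) of a profile.

    `luminance_profile` is a sequence of luminance samples (e.g. cd/m^2)
    taken across a line-pair pattern.
    """
    l_max = max(luminance_profile)
    l_min = min(luminance_profile)
    if l_max + l_min == 0:
        raise ValueError("profile is entirely dark; modulation is undefined")
    return (l_max - l_min) / (l_max + l_min)


# Hypothetical samples across two line pairs, in cd/m^2.
sharp_profile = [200, 200, 10, 10, 200, 200, 10, 10]
blurred_profile = [140, 120, 70, 90, 140, 120, 70, 90]

print(f"Sharp pattern modulation:   {michelson_contrast(sharp_profile):.2f}")
print(f"Blurred pattern modulation: {michelson_contrast(blurred_profile):.2f}")
```

A value near 1 means the bright and dark lines are cleanly resolved. A lower value means blur or stray light is washing out the pattern, which is the kind of clarity loss a wearer would notice at near-eye distances.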

Scheduled for release in 2022, Radiant’s new XRE Lens offers an electronic focus feature so users can easily adjust focus to different points, measuring at multiple or dynamic focus distances that match perceived image distances in the headset projection. This is beneficial where variable distance projections are a concern. The lens also features a folded (“periscope”) design to measure from the pupil position in various headset form factors. This is especially useful for measuring headgear that is designed with hardware surrounding the head, leaving no linear entry point for standard optics to reach this position. The folded design solves this problem by allowing system optics to enter the headgear from beneath and then angle 90° to reach the pupil position.

Radiant’s AR/VR Lens shown with a ProMetric Y Imaging Photometer (top left), our TT-ARVR test software (bottom left), and a sneak preview of our new XRE Lens, shown with a ProMetric I Imaging Colorimeter (right).

As we look ahead to smart helmets of the future, we can expect many more technological bells and whistles. Some engineers are already incorporating AI into a “Smart Helmet 5.0” that could extend IoT capability to devices such as personal protective equipment (PPE) helmets for healthcare workers.7 With the continual development of novel technologies and device capabilities, measurement and inspection solutions must also keep advancing.

For more information about quality testing the latest devices and components of AR, VR, and MR systems (collectively, XR), watch the webinar: “Novel Solutions for XR Optical Testing: Displays, Waveguides, Near-IR, and Beyond.” Presented by Photonics Media, the webinar provides guidance on XR optical performance testing, demonstrating novel technologies that emulate the human eye and are optimized for accuracy, speed, and ease of use at each stage of component development and production.

CITATIONS

1. HUD Helmet Market by Connectivity, Component, Display, Outer Shell Material, Technology, End-User (Racing Professional, Personal Use), Function (Navigation, Communication, Performance Monitoring), Power Supply, and Region – Global Forecast to 2030. Report from MarketsandMarkets, December 2019.

2. Haridy, R., “US Army’s TAR head-up display to give soldiers a tactical edge.” New Atlas, May 25, 2017.

3. Ibid.

4. Frasier, W., “The Mind-Blowing Tech Inside the $560,000 F-35 Fighter Jet Helmet.” Boss Hunting, April 9, 2019.

5. Choi, YH, and Kim, Y., “Applications of Smart Helmet in Applied Sciences: A Systematic Review,” Applied Sciences, Vol 11, 5039. DOI 10.3390/app11115039

6. Ilminen, G., “Motorcycle Helmet Standards Explained: DOT, ECE 22.5 & Snell.” Ultimate Motorcycling, April 8, 2013.

7. Campero-Jurado, I., et al., “Smart Helmet 5.0 for Industrial Internet of Things Using Artificial Intelligence,” Sensors (Basel), Vol 20(21): 6241. November 1, 2020. DOI 10.3390/s20216241
